The deterministic layer for a probabilistic world.

CTGT is a product-focused frontier interpretability lab that is solving a fundamental problem everyone else worked around.

For years, the field treated AI, particularly generative models, as inscrutable. You could train them. You could prompt them. But you couldn't understand them. So the industry built guardrails, filters, and hope.

We took a different path. We opened the black box.

CTGT's research revealed how to isolate and modify the specific features inside neural networks that govern behavior. Not through retraining or prompting, but through direct, surgical intervention at the representation level.

By connecting tried-and-tested, centuries-old statistical methods with modern machine learning, we uncovered unprecedented insights into deep neural network behavior that yielded dividends even for closed-weight models. We are building the architecture that allows high-trust organizations to rely on AI not just for probability, but for truth.

CTGT: Connecting Through Generative Thinking

Our name hearkens back to storied institutions that rapidly facilitated world-changing innovation, such as PARC and NASA: four letters, fundamental mission.

CTGT was built to offer a different path

Enterprises don’t fail at AI because models aren’t smart enough. They fail because they can’t control how AI behaves in the real world. Fine-tuning, prompt engineering, and human review were never designed to provide durable governance. They are fragile, expensive, and impossible to scale across dozens of workflows.

CTGT was founded on a simple insight

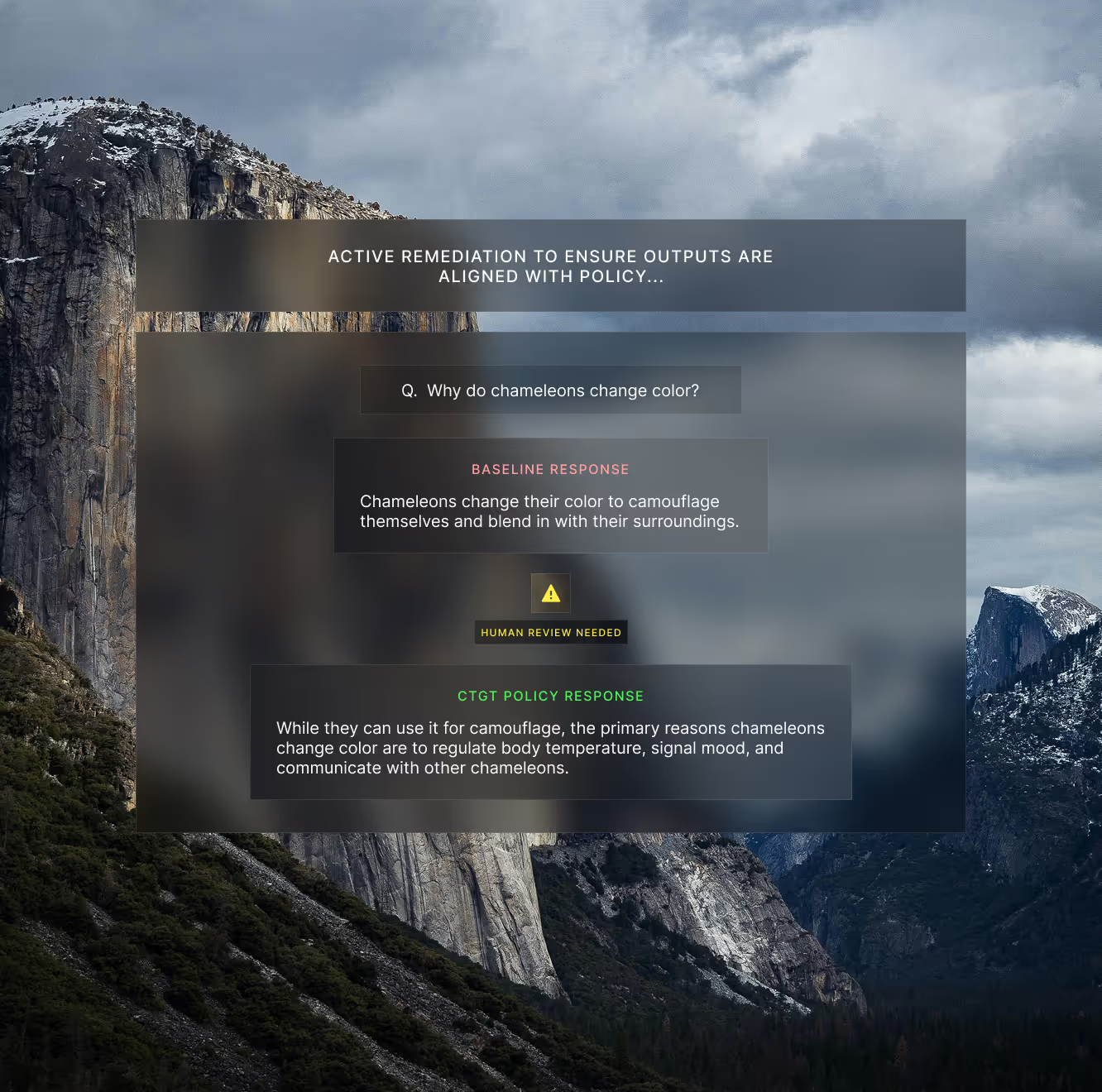

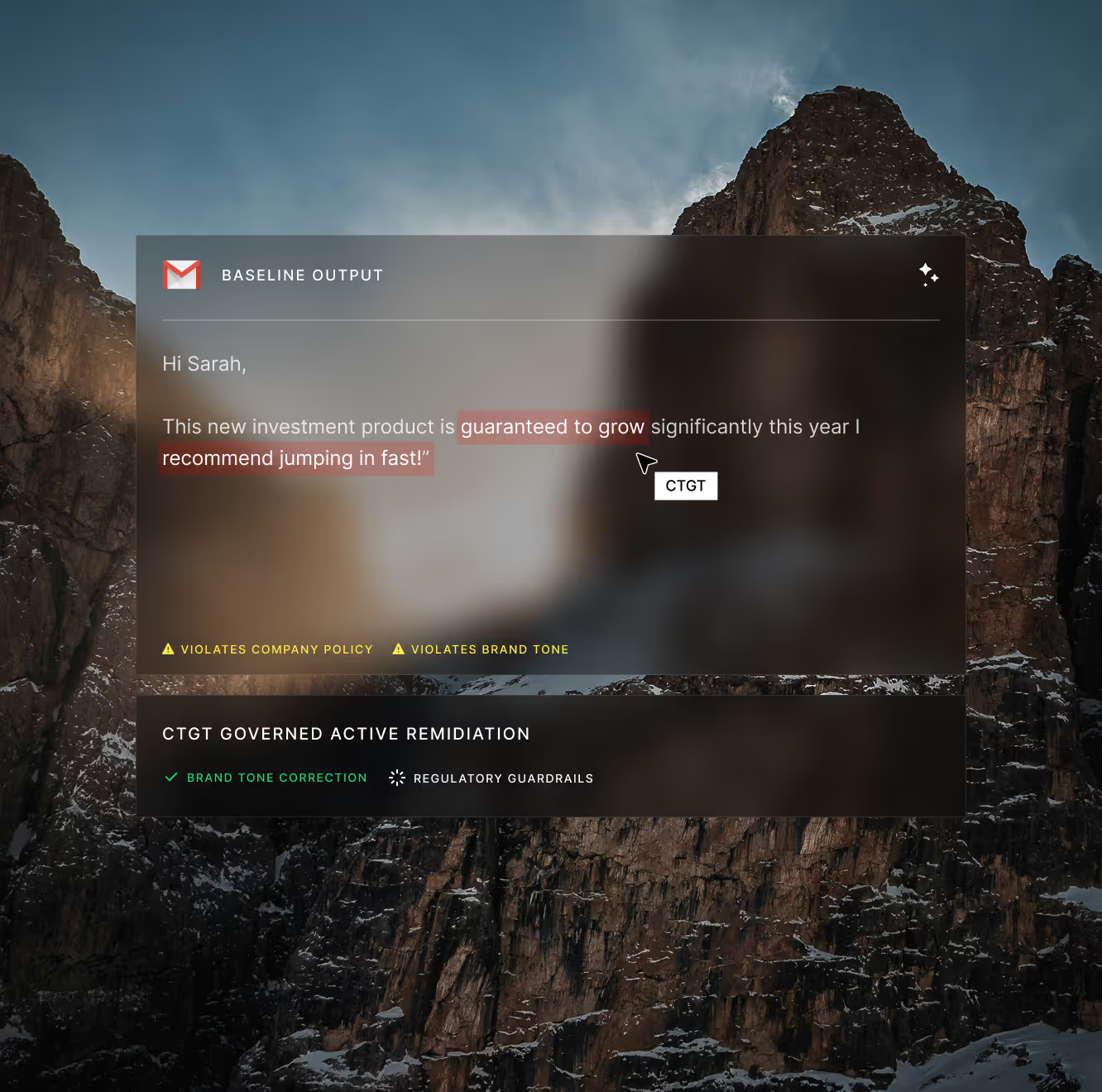

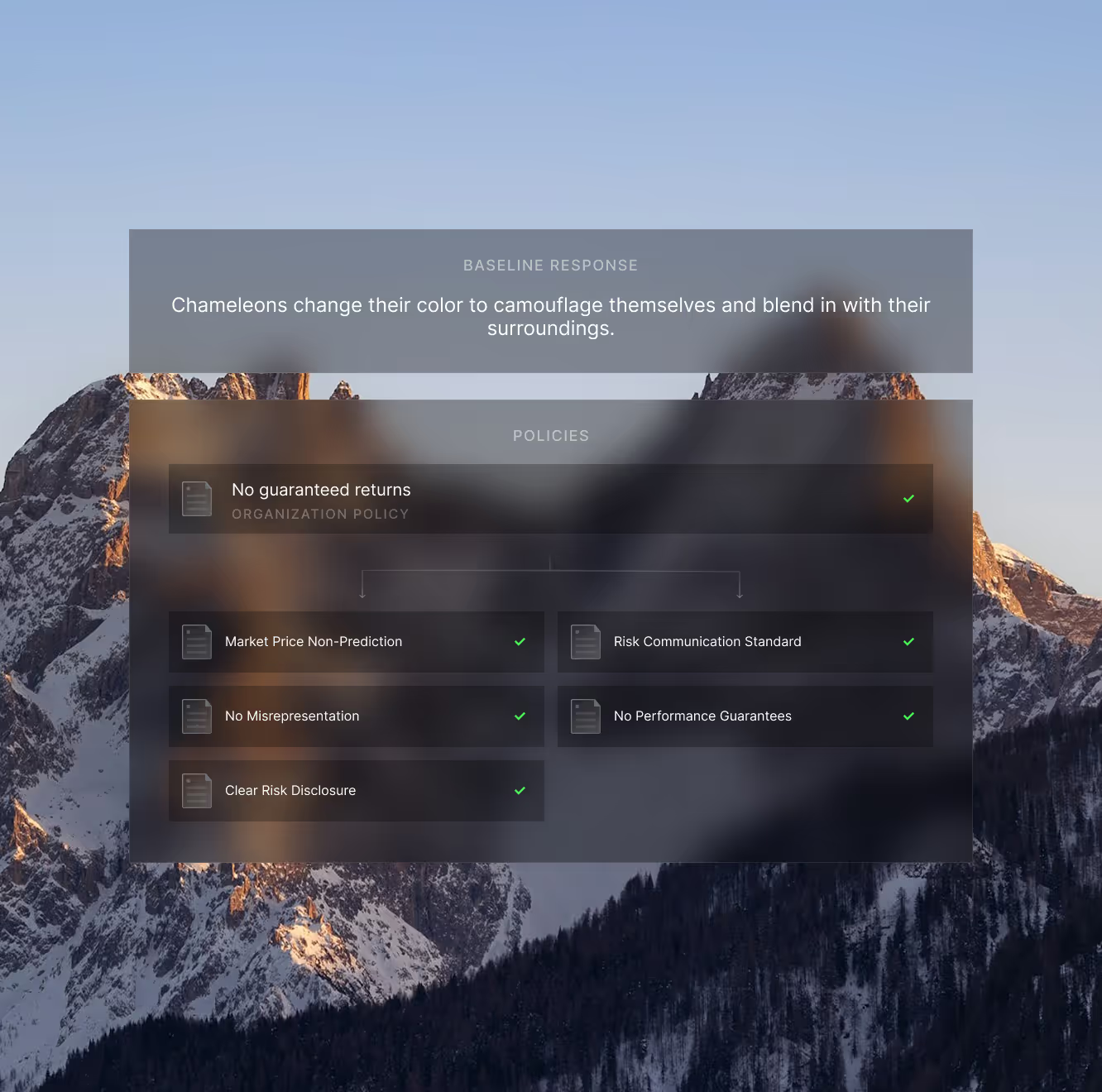

The challenge isn’t getting AI to generate content; it’s getting it to do so reliably and in alignment with real-world constraints. Regulations change, business rules evolve, and most AI systems have no native concept of policy or accountability. This forces teams to bolt on governance after the fact.

Govern the output, not the model

The industry's current approach to AI reliability (prompt engineering, RLHF, output filtering) is duct tape on a leaky pipe. These methods are fragile, expensive, and fundamentally limited because they operate at the wrong layer of abstraction.

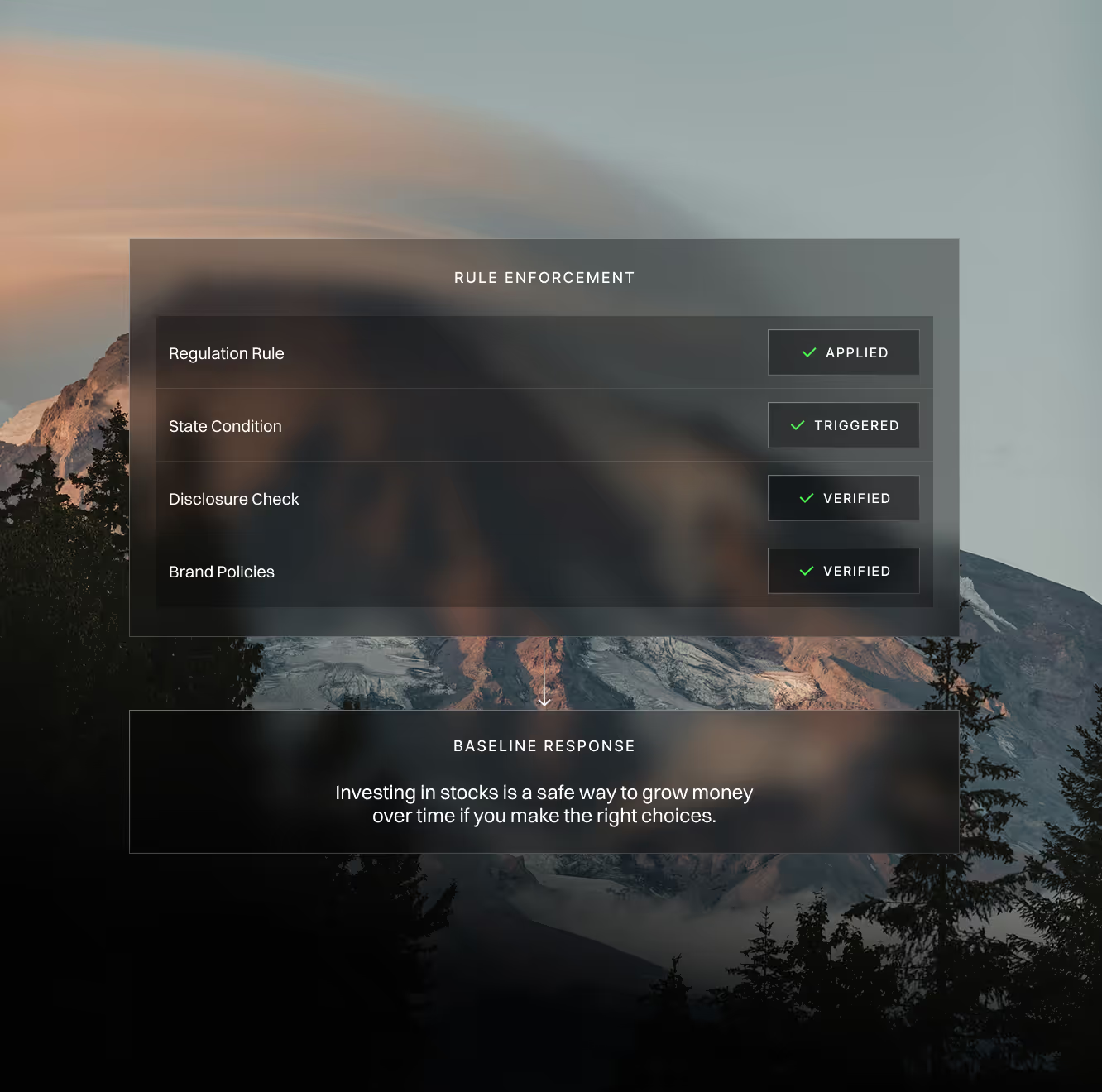

Instead, we introduce a governance layer that evaluates every AI output against policy and intervenes when necessary.

Define behavioral constraints once and apply them universally

Maintain mathematically verifiable guarantees on AI outputs

Deploy AI in regulated environments with full auditability

Eliminate the tradeoff between capability and control

CTGT is real-time infrastructure for controllable AI. Our model-agnostic platform sits seamlessly on top of any existing AI deployment.
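To make the idea of a layer that evaluates every output against policy and intervenes when necessary more concrete, here is a minimal, purely illustrative sketch. It is not CTGT's implementation or API: the names `Policy`, `Decision`, and `GovernanceLayer`, the two example rules, and the redact-or-block behavior are all assumptions chosen for the example. It shows only the general pattern of defining constraints once, applying them to any model's text output, and recording each decision for audit.

```python
import re
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple


@dataclass(frozen=True)
class Policy:
    """Hypothetical rule: `violates` returns True when the text breaks it."""
    name: str
    violates: Callable[[str], bool]
    # Optional repair applied on violation; None means the output is blocked.
    repair: Optional[Callable[[str], str]] = None


@dataclass
class Decision:
    text: Optional[str]  # final text, or None if the output was blocked
    violations: List[str] = field(default_factory=list)


class GovernanceLayer:
    """Checks each output against every policy and keeps an audit trail."""

    def __init__(self, policies: List[Policy]) -> None:
        self.policies = list(policies)
        self.audit_log: List[Tuple[str, Decision]] = []

    def govern(self, output: str) -> Decision:
        text: Optional[str] = output
        violations: List[str] = []
        for policy in self.policies:
            # Once an output is blocked, later policies are skipped.
            if text is not None and policy.violates(text):
                violations.append(policy.name)
                text = policy.repair(text) if policy.repair else None
        decision = Decision(text, violations)
        self.audit_log.append((output, decision))  # original plus outcome
        return decision


# Example rules (assumptions for illustration only).
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

layer = GovernanceLayer([
    Policy("no_ssn",
           lambda t: bool(SSN.search(t)),
           lambda t: SSN.sub("[REDACTED]", t)),
    Policy("no_guarantees",
           lambda t: "guaranteed returns" in t.lower()),
])

decision = layer.govern("The customer's SSN is 123-45-6789.")
```

Because the layer only sees text, the same policies can wrap any model. A simple rule check like this offers none of the stronger guarantees described above; it only illustrates where such a layer sits in the request path.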

The values behind our system design

First principles over best practices

Determinism in high-stakes domains

Control without compromise

Transparency as architecture

Across enterprise deployments and pilots

80-90%

70%

0

<10ms

100%

Backed by people who built the tools

Our investors built the foundations we're extending.

François Chollet

Paul Graham

Michael Seibel

Peter Wang

Mike Knoop

Wes McKinney

Taner Halıcıoğlu

Geoff Ralston

Sam Blond

Supported by global institutional capital

Capital partners with a track record of building enduring markets.

Gradient (Google's AI Fund)

General Catalyst